Hi {{first_name}}!

What a week. NVIDIA’s annual GTC conference featured CEO Jensen Huang giving a two-hour keynote. Not something most business owners will sit through, but it was significant to say the least, so I’m recapping it below in a deep dive. At the same time, what many are calling the next wave in the evolution of AI is starting to take shape: AI agents. Six major agent platforms are now actively competing for your workflows, while the legal and infrastructure stories in the background are beginning to shape how this all unfolds long-term. Beyond that, there were several genuinely interesting stories this week, so I pulled out what’s relevant, translated what needs translating, and added my honest take where it matters. As always, nothing in here is hype for hype’s sake.

This week:

The news stories worth a few minutes of your attention

The six AI agent platforms, compared head-to-head

Jensen Huang's GTC keynote, decoded for non-engineers

OK, let's get into it!

New and Noteworthy

Anthropic Launches Dispatch for Claude Cowork: Anthropic shipped Dispatch, a research preview inside Claude Cowork that lets you assign tasks from your phone and return later to finished work in the same continuous thread. If you’ve been following OpenClaw, this kind of low-friction handoff is part of what made it feel so accessible in the first place. With Dispatch, Claude now keeps context across mobile and desktop, and works using the files, connectors, and plugins already available in your desktop setup. One important nuance: Claude is still running on your computer, so your desktop needs to stay awake and the Claude app needs to remain open. If you’ve wanted a smoother handoff between a quick prompt on the go and real work waiting for you at your desk, this is an interesting step in that direction.

NVIDIA Enters the Agent Race With NemoClaw: At GTC 2026, Jensen Huang announced NemoClaw, NVIDIA’s secure software stack for running OpenClaw agents with more privacy, policy control, and enterprise readiness. The core idea is simple: run one install command and get a runtime that can route work between local models and cloud models based on rules you define. Sensitive tasks can stay on your own hardware, while outside models are only used when you allow it. NemoClaw installs OpenShell, adds security and privacy guardrails, and works across RTX PCs, workstations, and DGX systems. Huang said every company should have an OpenClaw strategy. NemoClaw is NVIDIA’s early answer to what that could look like in practice, and it is available now as an open-source early preview, which lowers the barrier for teams that want to start experimenting.

OpenAI Ships Two New Small Models Built for Speed: OpenAI released GPT-5.4 mini and GPT-5.4 nano, two smaller models designed for fast, high-volume agent workflows. OpenAI says mini approaches GPT-5.4-level performance while running at more than 2x the speed, making it a strong candidate for the execution layer of a multi-model system: a larger model plans, mini or nano handles the repetitive steps. Nano is built for classification, extraction, ranking, and lightweight subagent tasks where cost and throughput matter more than reasoning depth. One practical note: mini is available in ChatGPT, Codex, and the API, while nano is API-only. If you’re building or evaluating agent pipelines, the way you combine models is becoming as important as which model you use.

World Launches AgentKit to Verify Humans Behind AI Purchases: Sam Altman’s proof-of-personhood company World launched AgentKit, a tool that lets websites verify that a real human approved an AI shopping agent’s transaction. It works by linking an agent to a user’s World ID and passing that signal through the x402 system to participating sites. As autonomous agents start making purchases and taking actions on users’ behalf, retailers and platforms will need a way to confirm accountability. This looks like early infrastructure for that. Not a headline product yet, but an important signal: the commerce layer of agentic AI is starting to get its own set of trust and verification rules.

Merriam-Webster and Britannica Sue OpenAI for Copyright Infringement: Encyclopedia Britannica, which owns Merriam-Webster, filed a lawsuit against OpenAI alleging the company copied nearly 100,000 encyclopedia and dictionary articles without permission to train its models. The suit also claims ChatGPT can generate outputs that closely mirror Britannica’s content and raises trademark-related claims when inaccurate answers are falsely attributed to Britannica. This adds to the growing wave of publisher and rights-holder lawsuits challenging how AI companies use copyrighted material for training. A separate Britannica suit against Perplexity is still pending.

Meta Signs $27 Billion Infrastructure Deal With Nebius: Meta signed a long-term agreement to spend up to $27 billion on AI infrastructure through Dutch cloud company Nebius over the next five years. Of that, $12 billion is committed capacity across multiple locations, and another $15 billion is available on demand. It's a significant move that signals how much compute Meta expects to need as it scales its AI ambitions, including the Manus agent platform it acquired late last year. The bigger picture: the companies building AI infrastructure are becoming just as strategically important as the companies building the models.

Musk Admits xAI Got Its Hiring Wrong: Elon Musk said xAI was not built right from the start, that strong candidates were turned away, and that his team is now going back through old interview files to reconnect with people they may have missed. The comments come amid co-founder exits, internal restructuring, and growing pressure to improve execution as the AI race accelerates. The bigger takeaway: this race is not just about models or capital. It is also about whether the right people are in the room building the thing.

Before You Pay for an AI Agent, Read This

Every major tech company seems to have shipped an autonomous AI agent in the last 90 days. Microsoft, Google, OpenAI, Anthropic, Perplexity, and Meta-owned Manus all launched tools that promise to do your work for you.

Before you start routing your workflows through any of them, here is what you need to know.

There are six tools worth understanding. They all work. None of them replace a human yet. The right choice comes down to three things: where you already work, what you need automated, and what you are willing to spend.

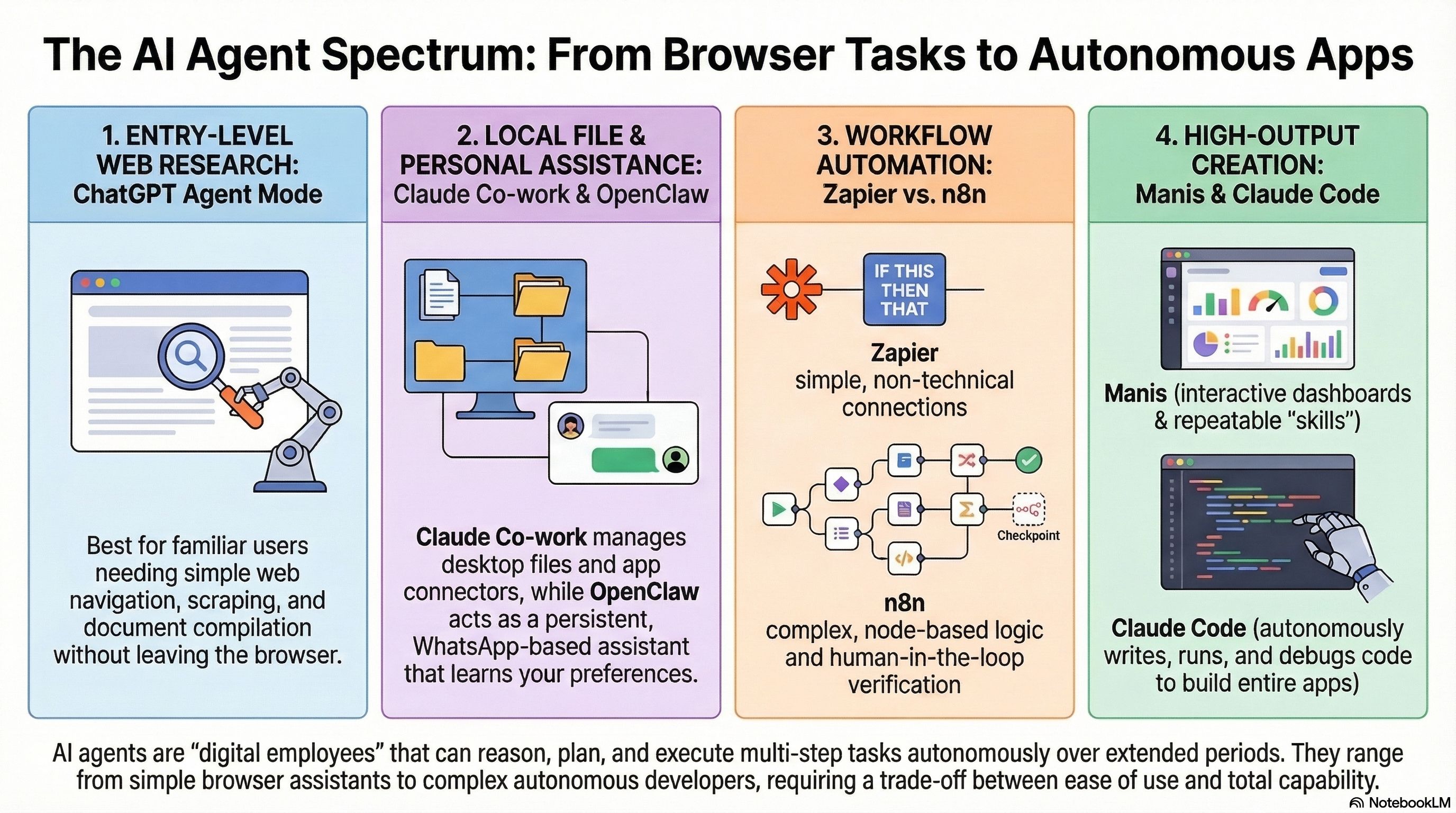

ChatGPT Agent is the most familiar starting point, but probably not the strongest long-term option for most power users. It is useful for seeing how agents work in a more visual and approachable way. Start here if you want a low-stakes introduction before committing to something more involved.

Manus is where things start to get genuinely impressive. Give it a high-level goal and it can plan, research, and deliver a finished output, whether that is a dashboard, a formatted report, or a deeper analysis. It also offers a free tier, which makes it easier to experiment, though costs can climb depending on how heavily you use it.

Claude Cowork is one of the strongest options for working directly with files on your computer, and it’s the tool I gravitate toward. Download the Claude desktop app, point it at a folder, and it can help organize, rename, create, and format documents on your behalf. Browser access and app integrations like Notion, Slack, and Google Drive depend on connectors, but the setup is fairly straightforward. One practical note: Claude is still running on your computer, so your desktop needs to stay awake and the app needs to remain open.

OpenClaw is the most powerful option here, and also the hardest to set up well and the most prone to security vulnerabilities. The appeal is that it can work continuously across your tools and environments, with a higher ceiling than almost anything else on this list. The tradeoff is setup complexity. This is not the kind of thing most people should casually run on their main machine without thinking through privacy, reliability, and control. NVIDIA’s new NemoClaw launch is a signal that the market is already trying to make that style of agent safer and more practical for real-world use.

Microsoft 365 Copilot is the obvious choice if your company already runs on Microsoft. It lives inside Word, Excel, Outlook, and Teams, and Microsoft is also pushing deeper into agent workflows through Copilot Studio. Current public pricing is $30 per user per month, paid yearly, on top of a qualifying Microsoft 365 subscription.

Perplexity Computer is built for deeper research workflows. It is especially strong when the work involves gathering, comparing, and synthesizing information across many sources. It is one of the more expensive options at $200 per month, so it makes the most sense when research itself is a meaningful part of the job.

Also worth knowing: Zapier and n8n. These are not personal agents; they are workflow automation tools, and for many businesses they are actually the better place to start. Zapier is easy to set up and connects to a huge number of tools. n8n is more technical, but it is also more capable. If you need specific, well-defined processes automated reliably, start there before you touch most of the agents above. This type of work is core to what we do at Ampra.ai.

One more thing before you commit to any of this.

AI agents are having a moment. And I get the appeal. They demo well. They sound exciting. They get people’s attention. But working with small businesses day to day, I’m seeing a pattern worth naming: most of them are still struggling to turn agents into real ROI.

Part of that is variability. Agents introduce more unpredictability than people expect, more security considerations, more room for inconsistency, and more things that can break. If an agent depends on a browser session, a local machine, or some fragile background process, it may not run when you think it will. That is a very different risk profile than a deterministic workflow running in a stable system. A well-built automation is often more dependable, not because it is smarter, but because it is simpler.

My take: most businesses should start in a simpler place. Automate what you already know works well and drives your business. Start with the repeatable processes. The workflows with clear steps. The tasks where the logic is already understood and the outcome is predictable. When X happens, do Y.

It may not be as flashy as an AI agent, but it is often more useful. Easier to trust. Easier to manage. Easier to test. And much more likely to create real time savings.
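For readers who want to see what "when X happens, do Y" looks like in practice, here is a minimal Python sketch. It is an illustration only, not how Zapier, n8n, or any other tool mentioned above works internally. The `Rule` class, the `run_rules` function, the event fields, and the two sample rules are all hypothetical names made up for this example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    """One deterministic automation: a condition and the action it triggers."""
    name: str
    condition: Callable[[dict], bool]  # the "when X happens" part
    action: Callable[[dict], str]      # the "do Y" part


def run_rules(event: dict, rules: list[Rule]) -> list[str]:
    """Return the result of every action whose condition matches the event."""
    return [rule.action(event) for rule in rules if rule.condition(event)]


# Hypothetical rules for illustration; real ones would call your actual tools.
RULES = [
    Rule(
        name="flag_large_invoice",
        condition=lambda e: e.get("type") == "invoice" and e.get("amount", 0) > 1000,
        action=lambda e: f"Flag invoice {e['id']} for review",
    ),
    Rule(
        name="welcome_new_lead",
        condition=lambda e: e.get("type") == "new_lead",
        action=lambda e: f"Send welcome email to {e['email']}",
    ),
]

if __name__ == "__main__":
    # The same input always produces the same output, which is what makes
    # this kind of workflow easy to test and easy to trust.
    print(run_rules({"type": "invoice", "id": "A1", "amount": 2500}, RULES))
    print(run_rules({"type": "invoice", "id": "A2", "amount": 50}, RULES))
```

Every rule is a plain condition paired with a plain action, so you can check each one with a sample event before it touches real data.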

Then, once that foundation is solid, bring AI in where it is actually needed, where interpretation, reasoning, or judgment adds real value. That is usually the better use of AI. Not as the starting point for everything, but as a layer you introduce selectively, once the process itself is already clear.

The platforms above are worth understanding. A few of them are worth using. Just make sure the underlying process is solid before you hand it to an agent.

Jensen Huang Spoke for Two Hours. Here's the Version for the Rest of Us.

NVIDIA’s annual GTC keynote is usually aimed at engineers and data center buyers, but this year’s message reached a lot further. Jensen Huang spent more than two hours making a case that AI is no longer just a tool people query. It is becoming infrastructure that does work around the clock, and that shift has real implications for how businesses operate.

The bigger signal was OpenClaw.

OpenClaw, an open agent framework, has been adopted so quickly that Huang compared its momentum to Linux, just compressed into weeks instead of decades. His message was clear: every company now needs an OpenClaw strategy. Whether or not that exact framing sticks, the underlying point matters. The software you already use is moving from static tools you click into systems that increasingly act on your behalf.

That means the question is no longer just, “Should we try AI?” It is, “Are we building the workflows now that will matter when agents are embedded everywhere?”

That lands close to home for SMB owners. You do not need to understand NVIDIA’s chips or follow token economics to see what is happening. What you do need to understand is that an enormous amount of infrastructure investment is being made to support the kind of work your business already does every day. The AI tools you are testing right now are an early preview of what your broader software stack may look like in the near future.

The businesses that start building now will not just be more efficient. They will build internal familiarity, judgment, and operational muscle that takes time to develop. That kind of institutional learning compounds. And once the rest of the market catches up, it gets harder to fake.

That is the real takeaway from GTC. Not that everyone needs to become an AI expert. But that the companies learning how to work alongside these systems now are likely to have an advantage later that is much harder to close.

That's a wrap on what was genuinely one of the more packed weeks in AI this year.

As always, if something in here sparked a question or you want to talk through what any of it means for your specific situation, just hit reply. I read every one.

See you next week,

Julien

PS: If you found this useful, forward it to someone on your team or a colleague who could use it. They can subscribe at www.ampra.ai/join-our-newsletter.